“You Can’t Trust Anything”: How Deepfakes Are Breaking Enterprise Verification Models

Security models are no longer enough as multi-modal attacks overwhelm traditional controls, forcing a rethink of enterprise trust systems.

Confluent deal highlights IBM’s focus on streaming data infrastructure to support AI deployment, governance, and hybrid cloud integration.

Project SnowWork introduces tooling to move AI from experimentation to execution, targeting enterprise-wide adoption and measurable ROI.

The Promptfoo deal underscores the importance of model evaluation, red-teaming, and reliability in scaling enterprise AI deployments.

Hyundai and Kia will integrate NVIDIA DRIVE to support scalable autonomous systems, from ADAS to robotaxi development.

New partner program from Anthropic funds training, technical support, and go-to-market collaboration to accelerate enterprise adoption of Claude AI.

Friday, 13 March 2026 | Enterprise AI Governance & Security

Would your current AI governance framework survive a real audit, a regulatory inquiry, or an agentic system going off-script at machine speed? Across six sessions on the AI-360 BrightTalk channel, practitioners from Google, PayPal, IBM, Crown Cards, Santa Clara University School

Speakers from Google, PayPal, IBM, and other organizations tackle AI governance, MCP security, and agentic risk, available on demand via the AI-360 BrightTalk channel.

The Model Context Protocol (MCP) is rapidly transforming how AI agents interact with enterprise systems, opening up a new class of supply chain, identity, and governance risks that security teams can’t ignore.

Hefty cash burn threatens OpenAI’s longevity in the face of a self-funded competitor.

Google DeepMind CEO warns that defensive systems must outpace AI-powered attack vectors as AGI approaches.

From the EU AI Act to cyber policy wording, panelists examined how emerging regulation and insurance structures intersect with enterprise AI deployment.

![Emotional Perception AI Limited v. Comptroller General [2026]](https://images.unsplash.com/photo-1593115057322-e94b77572f20?crop=entropy&cs=tinysrgb&fit=max&fm=jpg&ixid=M3wxMTc3M3wwfDF8c2VhcmNofDJ8fGdhdmVsfGVufDB8fHx8MTc3MDgzNDQzN3ww&ixlib=rb-4.1.0&q=80&w=1200)

The UK Supreme Court allows the appeal in Emotional Perception AI v. Comptroller General, mandating an EPO-aligned test for computer-implemented inventions under UK law.

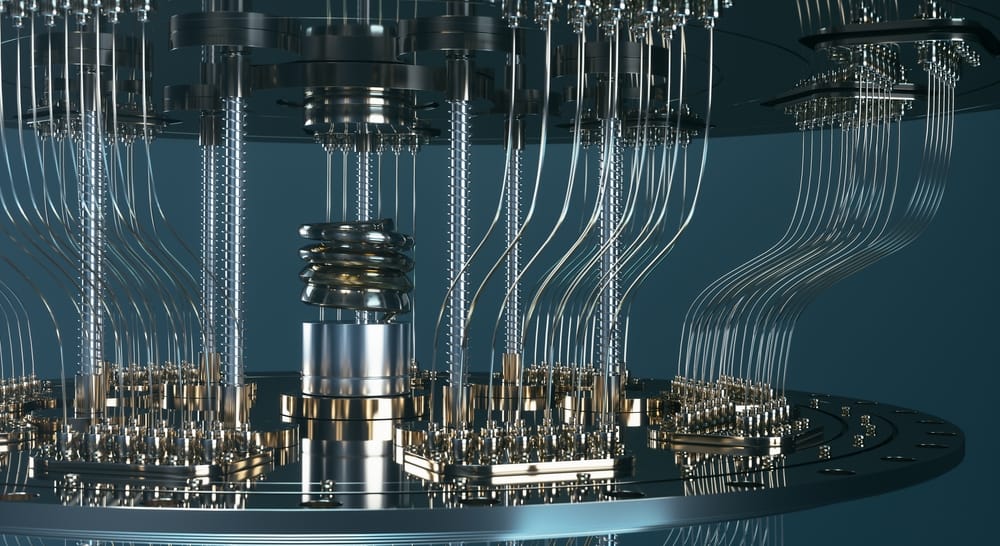

As GenAI scales across enterprises, quantum advances are compressing security timelines, challenging encryption lifetimes, governance models, and breach assumptions.

Under the $151 billion SHIELD contract, IBM will bring governed, interoperable, mission-grade AI to accelerate threat detection and response.

In parallel to its existing inquiry, the European Commission has launched a new investigation into how risks are assessed and mitigated in connection with the deployment of Grok’s functionalities in X.

IBM’s Cost of a Data Breach Report 2025 reveals faster detection offsets rising AI-driven attacks, though US breach costs hit a record high.

Experts discuss the practical steps organizations must take to secure AI, protect data, and operationalize responsible deployments.

Alan Turing Institute's 2024 lecture series explores AI's impact on democracy, addressing deepfakes, bias, and trust in three expert-led discussions.

OpenAI's SearchGPT prototype combines AI models with information from the web to provide quick answers with clear source attribution. It is currently in limited testing.

The AI agent will combine Salesforce's CRM data with Workday's HR data, letting employees complete tasks through natural-language requests.

OpenAI's new Rule-Based Rewards method improves AI safety without extensive human data collection. It uses simple rules to evaluate outputs, balancing helpfulness and safety in AI models.

Mistral Large 2, a new AI model, offers a 128,000-token context window and supports dozens of languages. It improves on coding, math, and reasoning, and produces fewer hallucinations.

Meta releases Llama 3.1, with a 128K context window, support for eight languages, and a 405B-parameter model, alongside new safety tools (Llama Guard 3, Prompt Guard, and CyberSecEval 3) and a continued open-source approach.